About Me

Hello, I’ve been a Senior Data Scientist at ModernaTX since 2023. I enjoy both research and practical implementation, and I specialize in scalable machine learning solutions that deliver impactful results across industries.

My experience includes:

- Data Scientist at Lowe’s Home Improvement: Enhanced customer experience and optimized operations through data-driven solutions.

- Senior Data Scientist at Infor: Built predictive models and analytics tools to support enterprise decision-making.

- Senior Data Scientist at ModernaTX: Currently, I develop tools and products on the Data Science Platform team to empower cross-organizational collaboration. I focus on predictive services and chatbots for Supply Chain, Legal, and Human Resources, and automate data connections to generate business insights.

I’m deeply interested in advances in Large Language Models (LLMs) and predictive services. My goal is to support other data scientists by building and deploying cloud-based tools that reduce process friction and make collaboration easier.

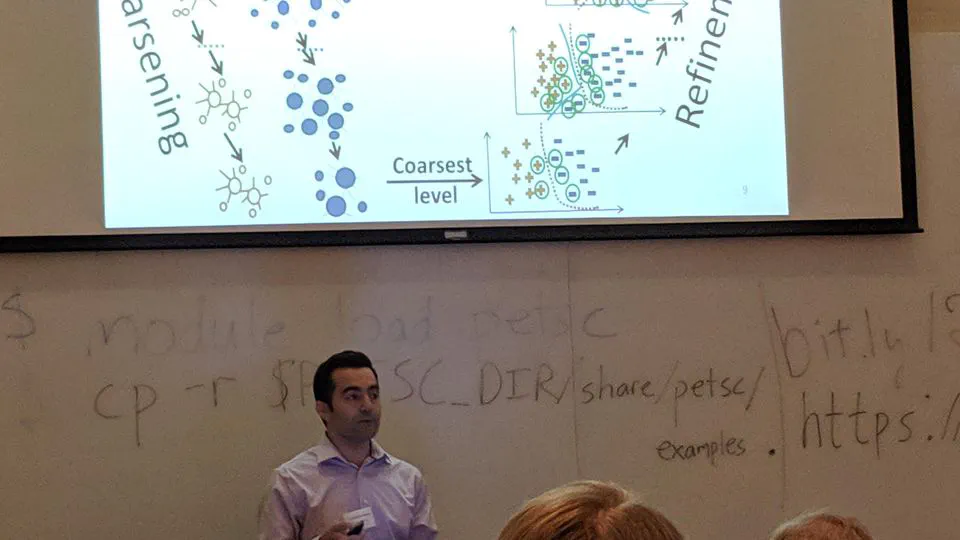

- Scalable Machine Learning

- Large Language Models

- Graph Mining

- Bioinformatics

PhD Biomedical Data Science and Informatics

Joint program of Clemson University and the Medical University of South Carolina

MS Computer Science

Clemson University

BSc Software Engineering

IAU

I am a data science researcher passionate about leveraging machine learning and artificial intelligence to create impactful solutions. My work focuses on automation, system integration, and generating insights that support strategic decisions.

I am always open to new opportunities and collaborations in advanced AI and ML projects. Feel free to reach out if you’re interested.